Qwen3.5

Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

Memory Requirements

To run the smallest Qwen3.5, you need at least 2 GB of RAM. The largest one may require up to 21 GB.
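As a rough guide to where figures like these come from, the sketch below estimates the memory needed for a model's weights from its parameter count and quantization width. It is a back-of-the-envelope approximation, not the method used to derive the numbers above: the function name, the `overhead_fraction` parameter, and the bits-per-weight values are illustrative assumptions, and real usage also depends on context length and the runtime.

```python
def estimate_weight_memory_gb(
    n_params_billion: float,
    bits_per_weight: float,
    overhead_fraction: float = 0.1,
) -> float:
    """Estimate memory (in GB, 1 GB = 1e9 bytes) to hold a model's weights.

    Assumption: memory ~= parameters * bits_per_weight / 8, scaled by a
    flat overhead fraction for runtime buffers. The overhead value is a
    placeholder, not a measured figure.
    """
    if n_params_billion <= 0 or bits_per_weight <= 0:
        raise ValueError("parameter count and bits per weight must be positive")
    if overhead_fraction < 0:
        raise ValueError("overhead_fraction must be non-negative")
    weight_bytes = n_params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead_fraction) / 1e9


if __name__ == "__main__":
    # Hypothetical quantization widths, chosen only for illustration.
    for bits in (4, 8, 16):
        gb = estimate_weight_memory_gb(9, bits)
        print(f"9B parameters at {bits} bits per weight: about {gb:.1f} GB")
```

Note that for an MoE model all experts must usually be resident in memory, so the total parameter count, not the active count, is the relevant input here.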

Capabilities

Qwen3.5 models support tool use, vision input, and reasoning. They are available in GGUF format.

About Qwen3.5

The models come in a few sizes, in both dense and sparse variants. For edge devices, try out the dense Qwen3.5-9B or the Qwen3.5-35B-A3B MoE.
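The model names encode the dense/sparse distinction. Assuming they follow the convention used by earlier Qwen MoE releases, a name such as `Qwen3.5-35B-A3B` denotes roughly 35B total parameters with roughly 3B activated per token, while `Qwen3.5-9B` is dense. The helper below is a hypothetical sketch that parses names of this shape; `ModelSize` and `parse_model_size` are illustrative names, not part of any Qwen tooling.

```python
import re
from typing import NamedTuple, Optional


class ModelSize(NamedTuple):
    total_b: float   # total parameters, in billions
    active_b: float  # parameters activated per token, in billions
    is_moe: bool     # True when the name carries an "-A<n>B" suffix


# Matches "-<total>B" optionally followed by "-A<active>B", e.g. "-35B-A3B".
_SIZE_PATTERN = re.compile(r"-(\d+(?:\.\d+)?)B(?:-A(\d+(?:\.\d+)?)B)?(?:-|$)")


def parse_model_size(name: str) -> Optional[ModelSize]:
    """Extract total/active parameter counts from a model name, if present."""
    match = _SIZE_PATTERN.search(name)
    if match is None:
        return None
    total = float(match.group(1))
    active_text = match.group(2)
    # Dense models activate every parameter, so active == total.
    active = float(active_text) if active_text else total
    return ModelSize(total, active, active_text is not None)


if __name__ == "__main__":
    for model in ("Qwen3.5-9B", "Qwen3.5-35B-A3B", "Qwen3.5-122B-A10B"):
        print(model, parse_model_size(model))
```

The active count mostly affects compute per token; the total count is what determines how much memory the weights occupy.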

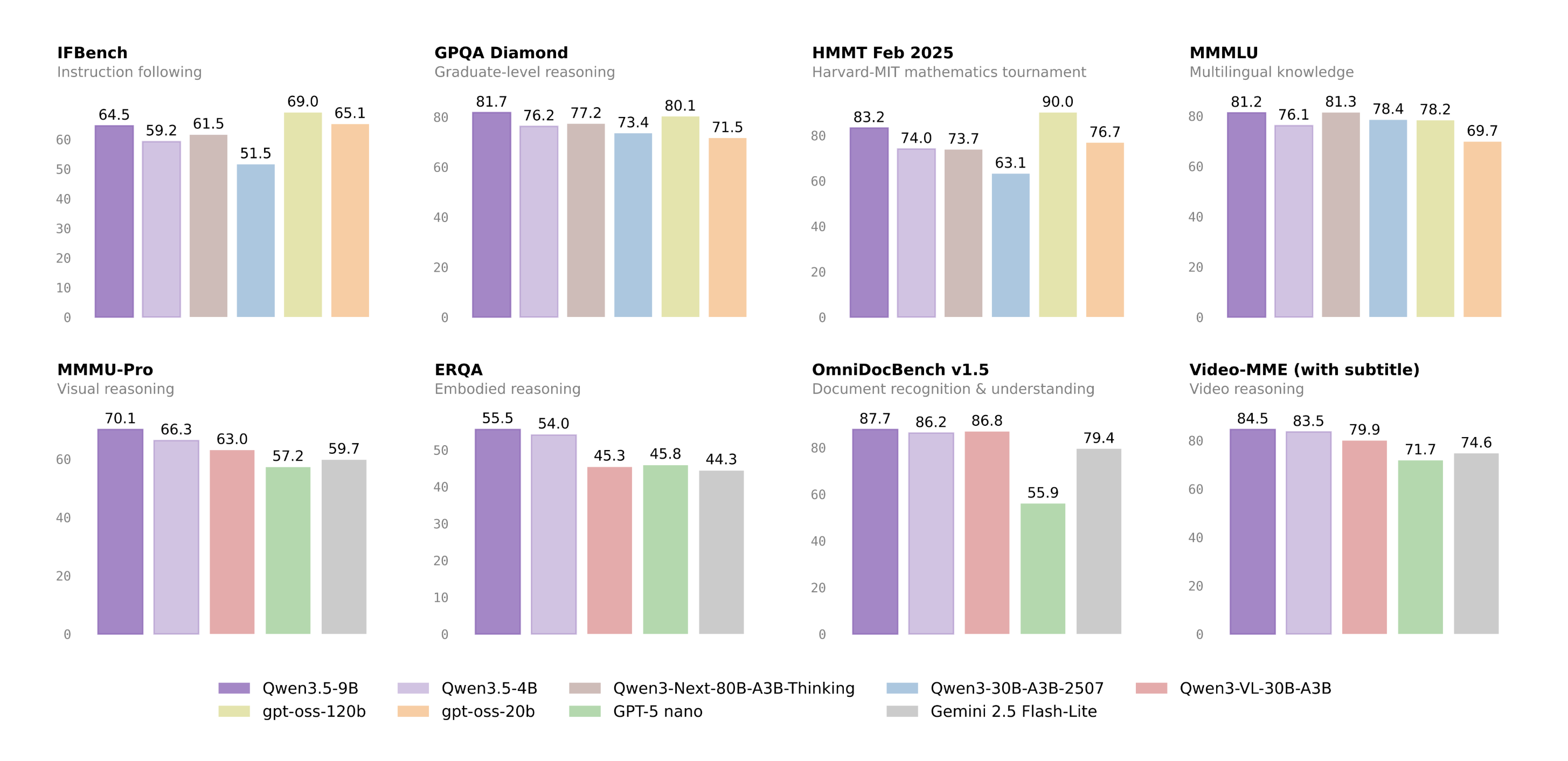

Benchmarks

Qwen3.5 models perform strongly in benchmarks. According to Alibaba Qwen, Qwen3.5-35B-A3B surpasses Qwen3-235B-A22B-2507 and Qwen3-VL-235B-A22B, a reminder that better architecture, data quality, and RL can move intelligence forward, not just bigger parameter counts.

Qwen3.5-122B-A10B and Qwen3.5-27B continue narrowing the gap between medium-sized and frontier models, especially in more complex agent scenarios.

License

Qwen3.5 models are available under the Apache 2.0 license.