Codex

Use Codex with LM Studio

Codex can talk to LM Studio via the OpenAI-compatible POST /v1/responses endpoint.

See: OpenAI-compatible Responses endpoint.
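You can also exercise the endpoint directly, without Codex. The bash sketch below builds a minimal Responses request body (`model` and `input`) and posts it with curl. It makes several assumptions: the server listens on localhost:1234, and the model key openai/gpt-oss-20b is available. The helper names (`json_escape`, `responses_payload`, `send_response_request`) and the `LMSTUDIO_URL` variable are hypothetical; they are not part of LM Studio.

```shell
#!/usr/bin/env bash
# Minimal sketch: call LM Studio's OpenAI-compatible POST /v1/responses with curl.
# Assumptions: server on localhost:1234 (override via LMSTUDIO_URL) and a model
# key that LM Studio can serve. All helper names here are hypothetical.

LMSTUDIO_URL="${LMSTUDIO_URL:-http://localhost:1234}"

# Escape backslashes, then double quotes, so a value fits inside a JSON string.
# Single-line values only: newlines and other control characters are not handled.
json_escape() {
  local s=$1
  s=${s//\\/\\\\}
  s=${s//\"/\\\"}
  printf '%s' "$s"
}

# Print the JSON request body for POST /v1/responses.
responses_payload() {
  local model=$1 prompt=$2
  printf '{"model": "%s", "input": "%s"}' "$(json_escape "$model")" "$(json_escape "$prompt")"
}

# Send the request and print the server's raw JSON reply.
send_response_request() {
  curl -sS "$LMSTUDIO_URL/v1/responses" \
    -H "Content-Type: application/json" \
    -d "$(responses_payload "$1" "$2")"
}

# Usage (requires a running server): send_response_request openai/gpt-oss-20b "Say hello"
```

The script only defines functions, so sourcing it has no side effects. The payload builder is kept separate from the network call so you can inspect the request body before sending it.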

Pro Tip

Have a powerful LLM rig? Use LM Link to run Codex from your laptop while the model runs on your rig.

Setup

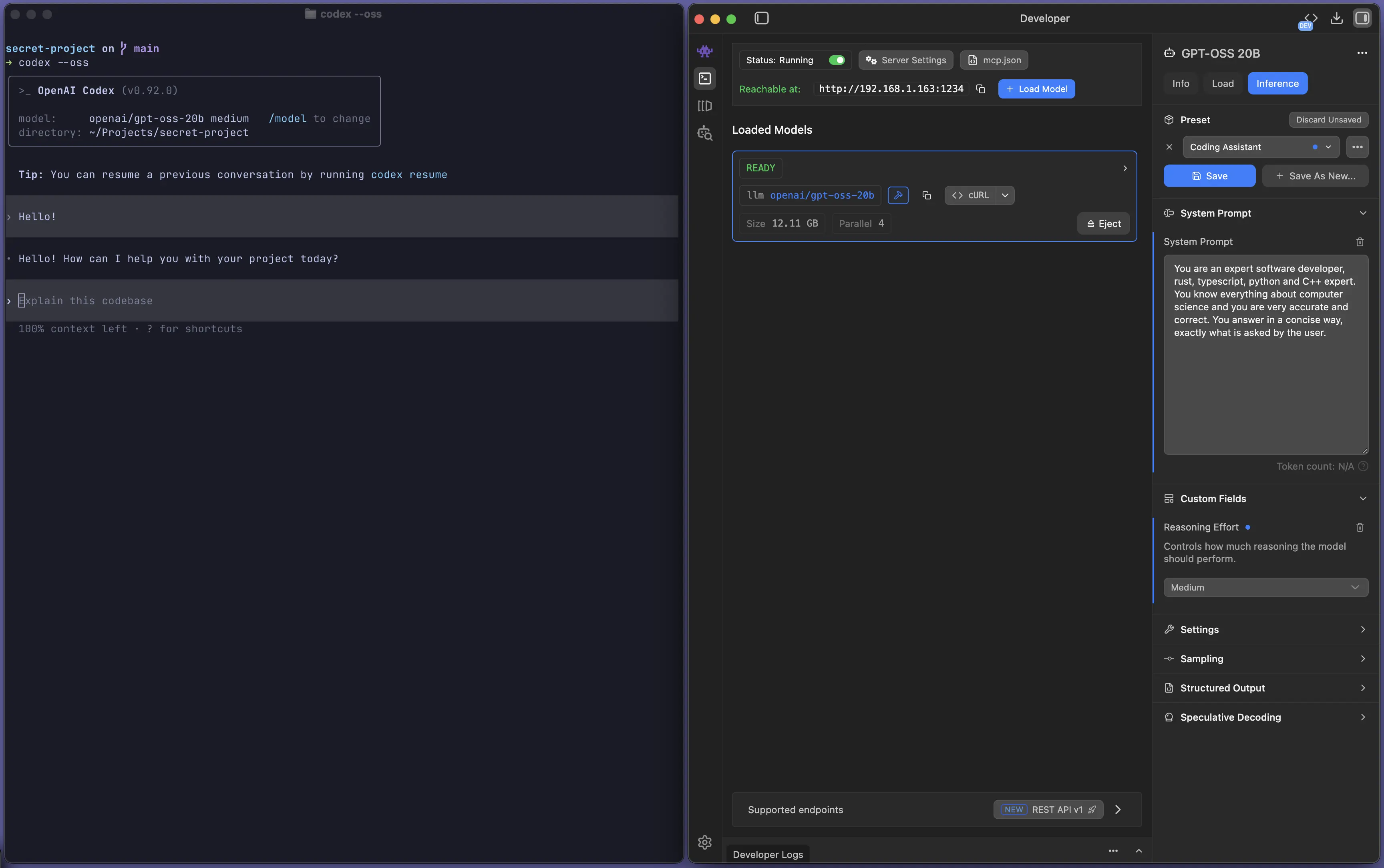

Start LM Studio's local server

Make sure LM Studio is running as a server (default port 1234).

You can start it from the app, or from the terminal with lms:

lms server start --port 1234

Run Codex against a local model
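Before launching Codex, you can check that the server is actually answering. The bash sketch below polls LM Studio's OpenAI-compatible GET /v1/models endpoint with curl until it responds. The function name `wait_for_lmstudio`, the retry count, and the one-second delay are illustrative choices; they are not LM Studio defaults.

```shell
#!/usr/bin/env bash
# Sketch: wait until LM Studio's server answers on GET /v1/models.
# The function name, default retry count, and delay are hypothetical choices.

wait_for_lmstudio() {
  local base_url=${1:-http://localhost:1234}
  local tries=${2:-10}
  local i
  for ((i = 1; i <= tries; i++)); do
    # -f: fail on HTTP errors; --max-time: bound each attempt to 2 seconds.
    if curl -sf --max-time 2 "$base_url/v1/models" > /dev/null; then
      return 0
    fi
    # Sleep only if another attempt follows.
    if ((i < tries)); then
      sleep 1
    fi
  done
  return 1
}

# Usage: lms server start --port 1234 && wait_for_lmstudio http://localhost:1234 && codex --oss
```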

Run Codex as you normally would, but add the --oss flag to point it at LM Studio.

Example:

codex --oss

By default, Codex will download and use openai/gpt-oss-20b.

Pro Tip

Use a model whose context length, as configured in your server/model load settings, is more than ~25k tokens. Tools like Codex can consume a lot of context.
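One way to control the context length is to load the model from the terminal before starting Codex. The bash sketch below wraps `lms load` and refuses values under the ~25k floor from the tip above. It assumes that `lms load <model> --context-length <n>` is available in your lms version and that Codex then uses this already-loaded instance rather than triggering a fresh load with default settings. The helper name `load_for_codex` and the 32768 default are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: load a model with an explicit context length before running Codex.
# Assumptions: lms is on PATH and supports --context-length on `lms load`;
# the helper name and the 32768 default are illustrative, not LM Studio defaults.

MIN_CODEX_CONTEXT=25000   # rough floor taken from the ~25k tip

load_for_codex() {
  local model=$1
  local ctx=${2:-32768}
  if [ -z "$model" ]; then
    echo "usage: load_for_codex <model-key> [context-length]" >&2
    return 2
  fi
  # Refuse context lengths below the suggested floor for Codex.
  if ((ctx < MIN_CODEX_CONTEXT)); then
    echo "context length $ctx is below ~$MIN_CODEX_CONTEXT tokens; Codex may run out of context" >&2
    return 1
  fi
  lms load "$model" --context-length "$ctx"
}

# Usage (requires LM Studio): load_for_codex openai/gpt-oss-20b 32768 && codex --oss
```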

You can also use any other model you have available in LM Studio. For example:

codex --oss -m ibm/granite-4-micro

If you're running into trouble, hop onto our Discord.